In June of 2006, Christie’s auction house put the 1927 painting La Horde by artist Max Ernst on the cover of the catalog for their evening sale of Impressionist and Modern art. Typically the catalog cover is reserved for the most exciting work in the sale, and Christie’s was certain they had a clear winner with this Surrealist masterpiece. As they wrote in the May 2006 press release leading up to the sale:

Leading the Surrealist section of the sale is La Horde, 1927 (estimate £2,500,000-3,500,000), one of the finest examples from a small series of strange and powerful paintings by Max Ernst, made with his then newly discovered grattage technique. “This exciting discovery is the most important work by Max Ernst to appear at auction in at least a decade,” said Olivier Camu, co-head of the evening sale.

There was only one problem… the “most important work by Max Ernst to appear at auction in at least a decade” was actually created by notorious art forger Wolfgang Beltracchi.

Wolfgang Beltracchi in studio

Someone did a pretty good job of burying Christie’s information on this embarrassing sale, but after a fair amount of detective work, I was able to track down a link to the press release and a low-res image of the catalog on Pinterest.

To be clear, I don’t think Christie’s was being negligent. I am sure they spent top dollar on due diligence to authenticate La Horde before bringing it to auction in such a bold and public way. The problem is human connoisseurship is more fallible than most in the art world want to believe or admit.

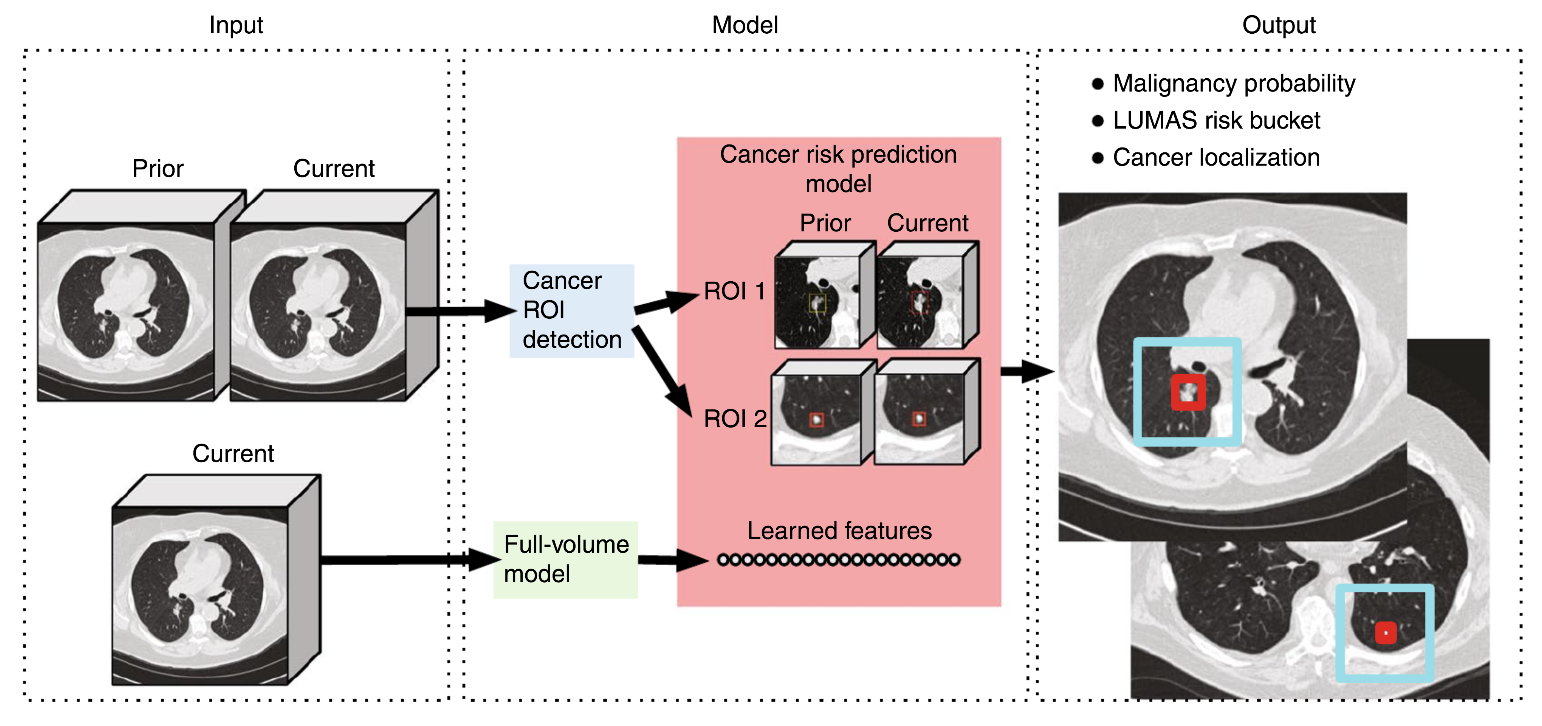

Other industries relying on human expertise and visual acuity for high-stakes decision making are realizing that AI can improve outcomes. For example, new research from Google published in the journal Nature Medicine last May shows that artificial intelligence is “as good or better than doctors at identifying lung cancer in CT scans”.

Credit: Nature Medicine Overall modeling framework.

The question is, can artificial intelligence be “as good or better” than art authentication experts in detecting that paintings like La Horde are forgeries? The answer is yes.

Two women in Switzerland, Dr. Carina Popovici and her co-founder Christiane Hoppe-Oehl, have developed an algorithm that correctly detected La Horde and several other known fakes using just a single photograph of the artworks in question. Their solution, the Art Recognition algorithm, looks at brushstrokes and produces an easy-to-read heat map that pinpoints which areas of the painting are most suspect. Their neural network is trained using machine learning and a comprehensive set of images of the artist’s works.

Art Recognition cofounders Christiane Hoppe-Oehl and Dr. Carina Popovici

Fairly skeptical and armed with a long list of technical questions (from myself and others), I set up a call with Dr. Carina Popovici to get a better understanding of how the Art Recognition algorithm works. Dr. Popovici was gracious, patient, and actually rather excited to have some challenging technical questions to address. By the end of the interview, I had nothing but admiration and awe for the impressive work the Art Recognition team has done in developing a scalable solution for attacking the world’s art forgery crisis.

Convinced others will feel the same, I am sharing my full interview with Dr. Popovici below.

Fighting Art Forgery With Machine Learning: An Interview With Dr. Carina Popovici

Art Recognition cofounder Dr. Carina Popovici

Jason Bailey: I love interviewing people working at the intersection of art and tech because they always have really interesting backgrounds. I’m always curious to learn if they started with a strong interest in art and migrated towards tech or the other way around. Please share a bit about your background with us. When did you start getting interested in art? Which artists are your favorite? Have you always been technically inclined?

Carina Popovici: Indeed, I have always been technically inclined. Back in my home country of Romania, I went to a high school with a strong focus on math and physics. I was fascinated by the physics of elementary particles, which later also became my main topic of research. At the same time, I always had a strong interest in arts, not just visual, but also literature and theater. I mostly like Surrealists – Dali is my favorite, but in recent years I discovered Max Ernst, whom I find quite amazing.

Another artist I admire is Modigliani, in part because of his turbulent life and tragic death. Strictly professionally, though, Modigliani is probably not the best choice, as he is the most forged artist of modern times.

Portrait of a Woman, by the Modigliani forger Elmyr de Hory, circa 1974

JB: How did you and your co-founder Christiane meet and decide to start a company together to detect art forgery?

CP: We met at Switzerland’s largest bank, where we worked together for a while. Our career paths later diverged, but we stayed friends. When the idea of an AI program to detect art forgeries eventually emerged, we discussed it thoroughly together. In 2017, I started working on the algorithm full time, and Christiane followed one year later. Meanwhile, it became clear that the program has great potential and that there is huge interest in the market, so at the beginning of 2019 we decided to take the plunge and start a business here in Zurich.

JB: Your academic background is in particle physics. How does this translate to using machine learning to identify forgery in art?

CP: We have a lot of practical experience in applying mathematical/machine learning (ML) models to large data sets. The problems that we’ve worked on across the years are of very different natures, yet they all require the capacity to translate a specific problem (which can be in physics, finance, and now art) into a mathematical model and then find the right method to solve it. What is also common to all applications is the need to find ways to handle data that are not as good as you would wish them to be. You need to continuously improve the models and use your creativity to make them solve the issue at hand. Also, neural networks were already a hot topic 15 years ago, but only in recent years have new algorithms and enough computing power to handle large data sets at a sufficient pace become available.

JB: How does the actual Art Recognition algorithm work? What is the model actually looking at in the artwork? Are there aspects of it that are essentially a black box, or are you able to understand all aspects of the analysis?

CP: The algorithm is based on a deep convolutional neural network which we train to “learn” the characteristics of an artist from a set of original artworks by that artist. Once the training has been completed, the algorithm has learned with extremely high precision the characteristics of that artist.

When a new, previously unseen artwork is being analyzed, the same types of features are extracted and compared with those already stored. If they match, the new image is labeled as original; otherwise, it is labeled as a fake. The probability of correctly distinguishing an original from a fake can be higher than 90%, depending on the style and artist, and we are continuously working on improving accuracy.

The black box character of AI algorithms is, of course, very well known. We as developers do not wish to bias the training. Therefore, we allow the network to choose itself the relevant features; the only feature which we give as “input” is the brushstroke. This comes at a cost, as it is not easy to find out what features have actually been learned. So we came up with the idea of producing a heat map which is meant to provide a visual interpretation of the decision process.

Beltracchi fake in the style of Max Pechstein

Art Recognition heat map highlighting areas of concern

JB: What advantages does Art Recognition’s approach have over traditional art authentication and forgery detection tools/approaches?

CP: One advantage is that the analysis can be run based on images alone - no transport of the original artwork is necessary. Another advantage is the speed, as we deliver our result in just a few days or even hours, whereas traditional committees sometimes need months. The method is not invasive; one doesn’t need to remove samples from the artwork, as it is done for chemical analyses. Finally, we believe that the objectivity provided through the computer is also an advantage, especially in cases where experts disagree and have long-lasting disputes.

JB: A combination of cloud computing and open-source machine learning platforms like TensorFlow has made it easier than ever for anyone to use machine learning. What does Art Recognition do that other people could not do on their own with these freely available ML models? What is your secret sauce, your competitive edge?

CP: We have been working on the algorithm for roughly three years now, continuously improving it and taking our experience from other fields into account. The algorithm is specifically streamlined for art authentication, and it includes a number of technical tweaks for that purpose. It works flawlessly and delivers very high accuracy. By now we have tested it on many known forgeries, we ran blind tests with art experts, and we are now working closely with art experts to fine tune the quality of the algorithm even more.

From a non-technical side, we have invested a lot of time into building up an image database of high-quality images of original artworks, as well as a trusted network of art experts and advisors.

Dr. Carina Popovici at work refining the Art Recognition model

JB: What materials are you using to train the model? Are you using transfer learning or have you trained your models from scratch?

CP: The input data are good-quality photographic reproductions of artworks by that artist. We only use images from trusted and verified sources such as catalogues raisonnés or museum databases. Ideally, the images should be of high resolution in order to make finer details available to the algorithm. If the resolution allows it, we generate several patches per image, thus augmenting the data set and making sure that no information is lost.
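As a rough illustration of what generating several patches per image can look like, here is a minimal sketch in plain Python. It is not Art Recognition's code: the function name `extract_patches`, the `patch_size` and `stride` parameters, and the toy 4x4 "image" are all assumptions made for the example. The idea is simply to slide a square window across the picture and keep each crop as its own training sample.

```python
def extract_patches(image, patch_size, stride):
    """Slide a square window over a 2-D grid of pixel values and collect the crops.

    Hypothetical helper for illustration; `image` is a list of equal-length rows.
    """
    height, width = len(image), len(image[0])
    patches = []
    for top in range(0, height - patch_size + 1, stride):
        for left in range(0, width - patch_size + 1, stride):
            rows = image[top:top + patch_size]
            patches.append([row[left:left + patch_size] for row in rows])
    return patches


# Toy 4x4 "image" whose pixel values are simply 0..15.
image = [[r * 4 + c for c in range(4)] for r in range(4)]

tiles = extract_patches(image, patch_size=2, stride=2)    # non-overlapping tiles
overlapping = extract_patches(image, patch_size=2, stride=1)  # overlapping tiles
```

With a stride smaller than the patch size the crops overlap, which yields more samples from the same photograph; with a stride equal to the patch size the image is tiled without overlap.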

We use a convolutional autoencoder as a feature extractor. The autoencoder is trained with mean pixel-wise reconstruction loss, but we also add some terms to the loss function adapted from style transfer. The piece coming from transfer learning has the explicit purpose of capturing the main brushstroke characteristics. All other features are trained from scratch.
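The interview does not spell out which style-transfer terms are added, so the following is only a sketch under a stated assumption: that the extra term resembles the Gram-matrix style loss commonly used in neural style transfer. The function names, the `style_weight` value, and the toy inputs are hypothetical and chosen for illustration; the real system would compute these quantities on network activations, not on hand-written lists.

```python
def mean_squared_error(original, reconstruction):
    """Mean pixel-wise squared difference between two equally sized flat pixel lists."""
    return sum((o - r) ** 2 for o, r in zip(original, reconstruction)) / len(original)


def gram_matrix(feature_maps):
    """Inner products between flattened feature maps, normalised by map length."""
    n = len(feature_maps[0])
    return [[sum(a * b for a, b in zip(fi, fj)) / n for fj in feature_maps]
            for fi in feature_maps]


def style_loss(features_a, features_b):
    """Mean squared difference between the Gram matrices of two feature sets."""
    gram_a, gram_b = gram_matrix(features_a), gram_matrix(features_b)
    diffs = [(x - y) ** 2
             for row_a, row_b in zip(gram_a, gram_b)
             for x, y in zip(row_a, row_b)]
    return sum(diffs) / len(diffs)


def combined_loss(original, reconstruction, feats_original, feats_reconstruction,
                  style_weight=0.1):
    """Reconstruction loss plus a weighted style term (the weight is an assumed value)."""
    return (mean_squared_error(original, reconstruction)
            + style_weight * style_loss(feats_original, feats_reconstruction))


# Toy example: two pixels, two feature channels with identical "style".
features = [[1.0, 0.0], [0.0, 1.0]]
loss = combined_loss([1.0, 0.0], [0.0, 0.0], features, features)
```

Because a Gram matrix records how feature channels co-occur rather than where they occur, a term like this rewards matching texture statistics, which is one plausible way to emphasise brushstroke character.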

JB: What type of information do you provide your clients with after the analysis is run?

CP: We provide a technical report showing the result (original/fake) together with the probability, and a heat map showing the main features which led to the decision. We also include a detailed explanation of our method for their reference.

Close-up of an Art Recognition heat map

JB: How accurate is the analysis you are running? What kind of testing have you conducted to validate your models? Have you been able to test known forgeries against your model? If so, how did they perform in those tests?

CP: Before launching the actual training, we split our original data set into a training set and a validation set. The paintings are split randomly, with 80% of images kept for training and 20% for validation. The validation data set has the important role of providing an unbiased evaluation of the trained model, as no painting in the training set appears in the validation set, and vice versa. The validation accuracy is in most cases above 80%.
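A minimal sketch of such a split, assuming it is made at the level of whole paintings so that patches from one painting can never land on both sides. The function name `split_paintings`, the fixed `seed`, and the placeholder painting identifiers are hypothetical.

```python
import random


def split_paintings(painting_ids, train_fraction=0.8, seed=0):
    """Randomly assign whole paintings to a training or a validation set."""
    ids = sorted(set(painting_ids))     # de-duplicate and fix the starting order
    random.Random(seed).shuffle(ids)    # seeded shuffle so the split is reproducible
    cut = round(len(ids) * train_fraction)
    return ids[:cut], ids[cut:]


# Ten placeholder identifiers standing in for verified originals by one artist.
paintings = [f"painting_{i:02d}" for i in range(10)]
train_ids, val_ids = split_paintings(paintings)
```

Splitting by painting rather than by patch matters when patches are used for augmentation: otherwise near-identical crops of the same canvas could appear in both sets and inflate the validation accuracy.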

By now we have successfully tested the model on several known forgeries by Wolfgang Beltracchi and Otto Wacker, and on the Lost Arles Sketchbook attributed to Van Gogh (generally held to be a forgery). We also ran blind tests on several artists, for example, Cézanne or Daumier. In all cases, the results were as expected.

'Still life' in the style of Cézanne by forger Tom Keating

Art Recognition heat map highlighting areas of concern

JB: Some folks have suggested to me that subdividing images is good for capturing low-level features like strokes, edges, shadows, shapes, etc. But the downside to this approach might be that you lose the overall structure of the painting when you split it up. How do you think about this balance?

CP: We agree with this. That is why we include in the training both the patches, which capture the low-level features, and the images as a whole, which contain important information about the composition. We have even noticed that for some artists, such as Manet, this type of overall structure is at least as important as the details of the painting.

JB: Does your approach tend to work better for certain types of artworks (drawings vs. paintings) or certain artists (Realism vs. Abstract)?

CP: So far, we have concentrated on paintings and only analyzed a few drawings, partly because most of the requests we receive are for paintings. We are working on enhancing the model to better handle drawings.

In terms of artists, the algorithm tends to work better on Impressionists, the reason being that this style is very rich in structure and brushstrokes are mostly well defined. But we are also happy with the results on other styles (Post-Impressionism, Expressionism). We actually analyzed only one painter with elements of Abstract art (Auguste Herbin). It worked very well but it is probably not enough to make a general statement.

Auguste Herbin, Composition sur Fond Vert - 1942

JB: I imagine that the ratio of size of the actual paintings to the digital images varies across your data set. This means some images may have a high level of detail while others may not. Do you have any concerns that this could inadvertently introduce bias?

CP: We do train on paintings of very different sizes, some larger and some very small. Our approach is to scale and crop all the images (both patches and full paintings) to equal size before feeding them into the neural network. While this doesn’t solve directly the problem of actual vs. digital size, it does make the analysis thoroughly consistent. The different levels of detail are blended together in the learning process, and that is perfectly okay if we are satisfied with the validation accuracy.
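To make the scale-and-crop step concrete, here is a small sketch in plain Python. The interview does not say how the cropping or resampling is done, so the center crop and nearest-neighbour resize below are assumptions, as are the function names and the toy input.

```python
def center_crop_square(image):
    """Keep the largest centered square of a 2-D grid of pixel values."""
    height, width = len(image), len(image[0])
    side = min(height, width)
    top, left = (height - side) // 2, (width - side) // 2
    return [row[left:left + side] for row in image[top:top + side]]


def resize_nearest(image, size):
    """Resize a 2-D grid to size x size using nearest-neighbour sampling."""
    height, width = len(image), len(image[0])
    return [[image[r * height // size][c * width // size] for c in range(size)]
            for r in range(size)]


def to_fixed_size(image, size):
    """Crop to a square, then scale, so every input has the same shape."""
    return resize_nearest(center_crop_square(image), size)


# A wide 2x4 toy image becomes a 4x4 network input.
wide = [[1, 2, 3, 4],
        [5, 6, 7, 8]]
fixed = to_fixed_size(wide, 4)
```

Every input ends up with the same pixel dimensions regardless of the physical size of the painting, which is the consistency described above; the relationship between canvas size and pixel size is still not modelled.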

JB: I am fond of saying that data and analysis are only as good as your ability to communicate the results. I love your heat maps because they are so intuitive and easy to understand. Can you share a bit more about how you are generating them?

CP: Thank you. The heat maps are generated by evaluating the “importance” of each pixel in the decision process – the technical word for it is “weighted evidence.” In practice, this is done by removing a small group of pixels, replacing them with the average of their surroundings, and recalculating the overall class probability with this modified input. By comparing this new probability with the original class probability, one can measure the influence of this group of pixels on the probability value. The process is repeated across the whole image, storing the modified probabilities. The resulting variations form different patterns, which are assigned different colors.

The patterns emerging as explained above basically show what are the features that had the biggest weight in the decision process, the idea being to make the decision of the network more understandable for the human viewer. In general, heat spots occur in the regions which comprise more structure (corners, edges, shapes, etc.), but they can also appear on flat pieces where the brushstroke changes direction.
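The occlusion procedure described in this answer can be sketched in a few lines of plain Python. This is an illustration under assumptions, not the company's implementation: `occlusion_heat_map` and `toy_predict` are hypothetical names, the occluded pixels are filled with the mean of the whole image as a simplification of "the average of the environment", and the stand-in classifier is deliberately trivial so the result is easy to check by hand.

```python
def occlusion_heat_map(image, predict, window=1):
    """Score each window by how much hiding it lowers the predicted probability."""
    height, width = len(image), len(image[0])
    # Simplification: fill occluded pixels with the mean of the whole image.
    fill = sum(sum(row) for row in image) / (height * width)
    baseline = predict(image)
    heat = [[0.0] * width for _ in range(height)]
    for top in range(0, height, window):
        for left in range(0, width, window):
            cells = [(r, c)
                     for r in range(top, min(top + window, height))
                     for c in range(left, min(left + window, width))]
            occluded = [row[:] for row in image]   # copy so the input is untouched
            for r, c in cells:
                occluded[r][c] = fill
            drop = baseline - predict(occluded)    # positive: region supported the class
            for r, c in cells:
                heat[r][c] = drop
    return heat


def toy_predict(image):
    """Stand-in classifier: the 'probability' is just the top-left pixel value."""
    return image[0][0]


image = [[1.0, 0.0],
         [0.0, 0.0]]
heat = occlusion_heat_map(image, toy_predict)
```

In this toy case only the top-left pixel influences the prediction, so it is the only cell with a non-zero score. With a real network, `predict` would be the trained model's class probability, and the scores would be mapped to colors to produce the heat map.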

JB: Do you see Art Recognition as being robust enough to detect forgeries on its own or is this just the first step in the journey for collectors who want to authenticate work from their collections? If just the first step, what other steps do collectors take after you have analyzed a photo of the work in question?

CP: All tests have shown that the Art Recognition program and results are very robust. However, we think that a rigorous investigation, especially when it comes to very valuable pieces, should also include other aspects, such as provenance research. All the elements can then be put together to reach the final conclusion.

The Art Recognition results could be, for example, used by the collectors as a precheck before approaching an art expert, but also by the art connoisseurs themselves as an additional piece of evidence when delivering their expertise.

JB: What other scientific approaches and tools do you think best complement Art Recognition’s machine learning-based detection?

CP: Chemical analysis of pigments or carbon detection can help to find out if the materials used (canvas, paint) are from a certain time period, but that is, of course, no guarantee that the artwork is actually original. In fact, it is known that skilled forgers have prepared pigments contemporary to the artist they forged and also used the canvas of ‘worthless’ paintings from which they stripped off the color.

One aspect which is very important for us is the quality of the images. The company Artmyn, based also here in Switzerland, is using high-tech scanners and cutting-edge technology to produce very high-resolution images. This kind of input would certainly help Art Recognition to deliver even better results.

JB: Do you see AI and machine learning eventually replacing human authenticators, or do you see AI/ML as a tool that will augment human connoisseurship?

CP: We see AI/ML as a tool to support and augment human connoisseurship. It can be especially helpful if experts disagree or have decided not to opine on authenticity anymore. Other situations where we could help are cases where the authentication committees have dissolved or where simply no expert exists.

JB: The art world can be notoriously conservative and slow to adopt new technologies. How has your technology been received so far? What objections have surfaced and how are you dealing with them?

CP: More or less all the art specialists we have worked and spoken with were very enthusiastic about our service and gave us a lot of honest feedback. Every encounter over the past three years has helped us improve our service.

There have been, of course, also a few situations where people showed doubt and were straightforwardly resistant to the idea of bringing AI methods into art. As you say, these are the very conservative and rather old-school personalities. Our strategy in such cases is to simply demonstrate that it works. For example, on a painting which we analyzed, the expert was quite amazed to see not only that the result was correct, but also that the features highlighted on the heat map matched his expectations exactly.

We are currently working closely with art experts not only to ensure the product we provide is really useful for them, but also to gain credibility in the market.

JB: The world of authentication is very high stakes. A work being deemed authentic or forged can mean the difference between a painting being worth tens of millions of dollars or essentially nothing. Because of this, several authentication boards have been tangled up in lawsuits with angry collectors who disagree with their opinions. The Warhol authentication board famously ran out of money for this reason and had to disband. As a fledgling two-person startup just getting off the ground, how are you thinking about the potential for these issues?

Self Portrait that was central to the lawsuit leading to the shutdown of the Warhol authentication board

CP: We are indeed aware of all of the legal issues which can potentially emerge. We also know that some art experts seem to move away from “authentication” for the reasons you mention. Yet because of the (occasionally) hostile view towards authentication, I think it is even more important to have at hand a tool which is completely objective, emotionless, and has no fear of lawsuits.

While we are continuously working on improving the accuracy, it is well known that, by its nature, no AI algorithm can be 100% certain. We assure our clients that the analysis is done most carefully and with due diligence, but we also explain to them that we cannot provide 100% accuracy. The clients have, of course, the freedom to use the authenticity evaluation resulting from an algorithm in any way they find meaningful for their purpose, but we exclude all warranty on any further use of the technical report we provide to them.

JB: Many technical folks I have spoken to are skeptical that machine learning can correctly detect forgery based on images alone. You yourself have a Ph.D. in particle physics and your co-founder Christiane Hoppe-Oehl has an advanced degree in mathematics. What are these other folks missing that the two of you see in the potential for this solution/approach?

CP: It would be interesting to know why those folks are suspicious. In any case, teaching computers how to model large amounts of data in a meaningful way is nowadays a global trend reaching out to all domains, and I don’t see why art, or art authentication for that matter, should be an exception. When developing an algorithm for a problem which is rather atypical, one should, of course, take into consideration the particularities of that problem. To give you an example, when preprocessing the images, we are careful not to cut them into patches smaller than the brushstroke, and we make sure to remove effects such as light spots, shadows, etc. Our network architecture is also specifically designed for this type of image recognition. So I wouldn’t say that there is a big secret that people out there are missing, but rather many small details which should be embedded at every step of the analysis in order to have it working properly.

Finally, the results alone should speak for themselves, as in all tests, the algorithm worked perfectly well.

JB: What is the long-term vision for Art Recognition?

CP: Our vision is that Art Recognition will become a trusted label and that every artwork coming up on sale has been checked by our algorithm.

JB: Why have you decided to partner up with Artnome?

CP: We are really thrilled to see that you are so enthusiastic about the same topics as we are, and it is great fun to team up and move the case forward together. Your network and images are a tremendous help for us. At the same time, we hope we can help you fulfill one of your dreams – bringing down forgery in the art market!

JB: How do people engage with you if they want to know if they have a forgery or an authentic work?

CP: For the time being, they can send us an e-mail with a photo of the artwork in question and their assumption about the artist. Our algorithm then analyzes the image, and within a few days, we get back to them with a full report. We are planning to soon give our clients the possibility to upload the images directly via our website.

Conclusion

We need to stop celebrating forgers as lovable rascals getting one over on rich collectors and start celebrating real heroes like the Art Recognition founders, Popovici and Hoppe-Oehl. The reality is that art forgers undercut our shared humanity by compromising the historical record of our most important cultural objects: our art. I’m proud and excited to be both a partner and an advisor to Art Recognition and look forward to working with them to put an end to forgery in our museums and on the art market in my lifetime.